My teacher will be an AI (maybe)

25 June, 2018

An important distinction in the field of Artificial Intelligence (AI) is between weak (or narrow) AI and strong (or general) AI, the latter also known as artificial general intelligence, or AGI. Narrow AI is a computer program that uses AI techniques (machine learning, deep learning) and is designed to solve a specific problem (from playing chess to detecting pedestrians and obstacles on the road). AGI, however, refers to machines that can solve many types of problems on their own, just as humans do; in other words, they can understand their environment and reason like a human. All the applications of AI that we have seen up until now are examples of narrow AI.

Although strong or general AI is an active research subject, it will probably take a few decades to become a reality (and don’t forget that we’ve spent sixty years saying it’ll happen in the next twenty). For the time being, we only find AGI in sci-fi literature and films, although in recent years we’ve seen some very significant advances in AI techniques that make a viable AGI conceivable: maybe now we really can say it’ll happen in a couple of decades.

So, what if we applied this AGI to education? What should a bot/assistant or AGI devoted to learning be like? If we take what we can already do now (with chatbots and machine learning) as a starting point, we’re going to come across lots of questions about AGI along the way, and we’ll need to answer them as we add advances to our model. Who knows? Maybe in 20 years, our teacher will be an AI…

Thoughts on appearance, personality and teaching attributes

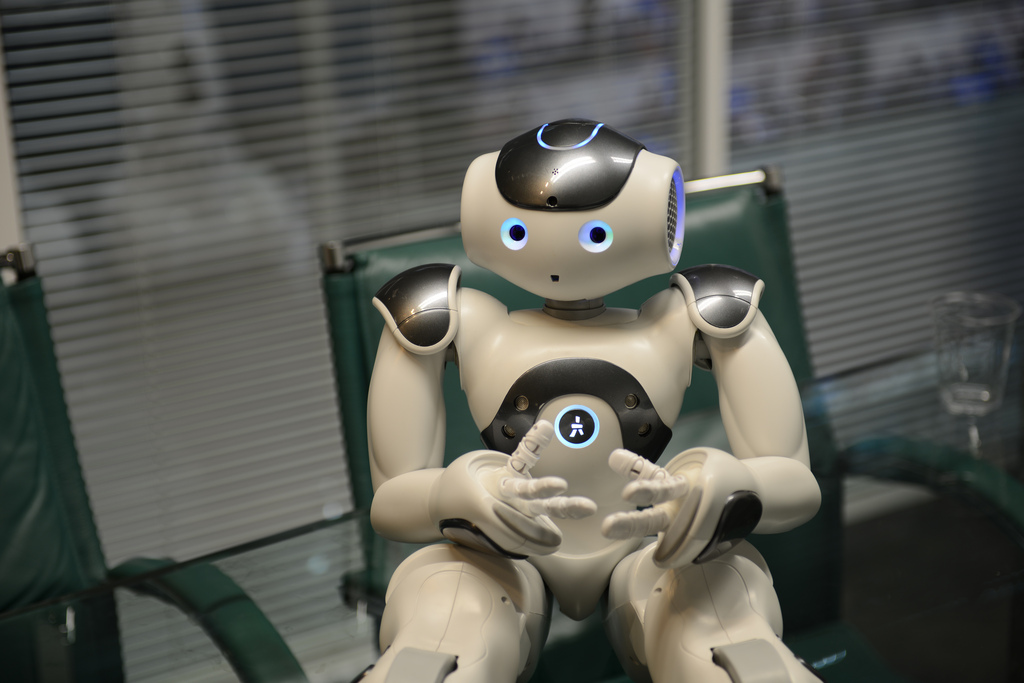

It will be very important to decide the degree of anthropomorphism (the tendency to treat non-human things as though they were human) that we want for our teaching assistant. Should we give it human qualities or characteristics, like a name? How will we decide what it will be like (not just its visual appearance but its voice or accent too, if it speaks)? Will it have a physical (robotic) presence or merely a virtual one? Does it need a back story? Some anthropomorphism would probably help with communication and ergonomics. We also have to think about whether there will be just one assistant for everyone, a specific one for each student, or a common one for each subject.

It may seem rather banal, but we already call Siri and Alexa by their names. At the moment, we don’t do that with a car satnav (although maybe some people do), but the lines are blurred. When the assistant becomes someone we talk to and ceases to be a simple tool, that’s when we’ll need to think about these things.

The debate on the level of “humanity” that we need to give it and what relationship it will establish with the student will be interesting. We’ll be able to simulate a lot of human characteristics in terms of personality, but we’ll have to decide whether to simulate a sense of humour, as well as sensitivity, empathy, assertiveness and a whole lot more. In theory it will also be able to detect the student’s frame of mind and act accordingly. Besides that, we also need to think about whether it will remember past sessions.

I also wonder if our virtual teacher will limit itself to answering queries (and thus be a tool) or if it will be proactive and give advice on how to do the tasks, remind students of submission dates and ensure their success. Will it be like a teaching coach? Perhaps we need to place limits on the help it provides to stop the student from relying too much on it… And will we be able to change the settings? Some students may feel happier with a virtual teacher with a specific personality or that uses a certain tone of voice.

And finally, as regards the bot’s level of “wisdom”, what subjects will it know? Just the ones in the official university curriculum? Or will it be open to external resources (like Wikipedia or other internet sources)?

Ethical considerations

Consideration 1. Honesty and transparency

Is it fair to trick the students and not tell them that the teaching assistant is an AI, as in the well-known case of Jill Watson at Georgia Tech, or the recent example of Google Duplex, where the hairdresser’s or the restaurant supposedly doesn’t know that the customer is a machine? Or is it preferable to say clearly that it’s a human-machine team? I think the second option is better and fairer.

Consideration 2. Extreme anthropomorphism and the “uncanny valley”

Connected to the previous consideration is another matter to take into account. The uncanny valley is a hypothesis in robotics that says that as a robot comes to look more human, people’s emotional response to it becomes more positive and empathetic, but only up to a point. Beyond this point, the response changes and turns into revulsion. After this, if the appearance becomes even more human, we return to high levels of empathy. In other words, we either have to humanize the robot only up to a point, making sure it doesn’t create fear or anxiety, or do the opposite and make it virtually indistinguishable from a human.

Consideration 3. Bias due to incorrect training of machines

The teaching responses of the AI bot may be wrong because we’ve trained it with flawed data, such as previous answers given by other students (in debates), previous interactions with the student or material from the internet that hasn’t been validated. I’m a firm believer that a human expert has to be present in some way in the process to validate the training data. We need to have a “human in the loop” (HITL) to ensure that there’s no bias and that what happened with Tay, the Microsoft chatbot that turned racist, doesn’t happen again.

Consideration 4. The final objective of the machine

As is the case with autonomous cars (which, in the hypothetical event of an accident, may decide who lives and who dies), the final (programmed) objectives of the AI bot could be varied and even contradictory:

- The objective may be for the student to learn (and so run the risk of the AI bot setting difficult and very challenging activities that could lead to the student failing).

- Or it could be for the student to pass (and then there’s the danger that the AI bot sets tests that are too easy or suggests the answers, so the student passes the course without learning).

- The objective may even be for the student to enrol on a lot of courses (and then perhaps the AI bot doesn’t provide realistic information about the student’s ability to take so many courses and hides potential difficulties in passing them).

We see that the stakeholders in the teaching process (teachers, students, marketing, finance departments, society, the job market, etc.) may have opposing objectives. In this case, we need to put “society in the loop” and draw up an educational social contract.

Conclusion

We may have to wait a while before an artificial assistant with all the AGI functions in place is possible, but in the meantime, we think it’s worth starting to consider all of these questions; some of the answers can already be rolled out right now, given the current state of the technology.

And with our gaze fixed on the famous 20-year horizon, we also have to start thinking as a society about the role that AI will play in education, the implications of AGI, and the role that people and society are to play in it all.

*(Heading picture credits: Nao Robot by steveonjava at Flickr)