Abel Gómez: “Model-driven engineering makes it possible to democratize software development”

10 February, 2022

Abel Gómez is a researcher in the Internet Interdisciplinary Institute’s (IN3) Systems, Software and Models (SOM) Research Lab, and a member of the UOC’s Faculty of Computer Science, Multimedia and Telecommunications. In this interview, he talks about the group’s main lines of research and, above all, about model-driven engineering, a discipline that has been on the rise in recent years. He also discusses the group’s involvement in two European projects focused on cyber-physical systems, which are increasingly present in our everyday lives thanks to the Internet of Things and Industry 4.0.

The group’s main interest is model-driven engineering. Could you give a brief definition of what it is?

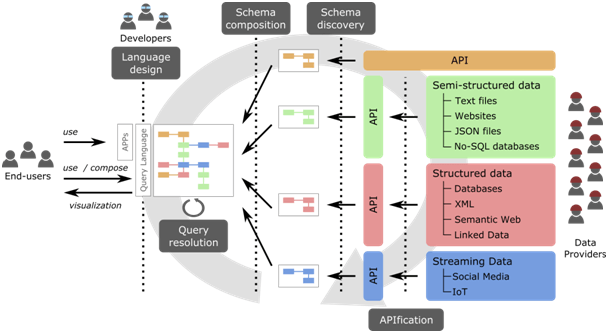

Computer Science and Information Technology are generally about automating processes that used to be done manually and repetitively. Model-driven engineering (MDE) consists of developing models or, in other words, an abstract description or specification of a program to automatically generate part of the software, which is usually the most tedious part of producing software.
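As a rough illustration of the idea described above, the following minimal Python sketch takes an abstract model and generates the repetitive part of a program from it. The names `Entity`, `Attribute`, `generate_class` and the `Sensor` example are hypothetical and chosen only for this illustration; they do not come from the SOM Research Lab's tools, and real MDE frameworks are considerably richer.

```python
from dataclasses import dataclass, field


@dataclass
class Attribute:
    """One field of a modelled entity (hypothetical metamodel element)."""
    name: str
    type_name: str  # name of a Python built-in type, e.g. "str" or "float"


@dataclass
class Entity:
    """Abstract description of a class we want to generate."""
    name: str
    attributes: list[Attribute] = field(default_factory=list)


def generate_class(entity: Entity) -> str:
    """Turn the model into Python source code for a plain class."""
    params = ", ".join(f"{a.name}: {a.type_name}" for a in entity.attributes)
    signature = f"self, {params}" if params else "self"
    lines = [f"class {entity.name}:", f"    def __init__({signature}):"]
    if entity.attributes:
        # The tedious, repetitive part: one assignment per modelled attribute.
        lines += [f"        self.{a.name} = {a.name}" for a in entity.attributes]
    else:
        lines.append("        pass")
    return "\n".join(lines) + "\n"


# The model: an abstract specification, not hand-written code.
sensor_model = Entity(
    "Sensor",
    [Attribute("sensor_id", "str"), Attribute("reading", "float")],
)

generated_source = generate_class(sensor_model)
print(generated_source)

# Load the generated code and use it like any hand-written class.
namespace = {}
exec(generated_source, namespace)
sensor = namespace["Sensor"]("s-1", 21.5)
```

In this sketch, a defect in the generated constructor would be fixed once, inside `generate_class`, and every class regenerated from a model would pick up the fix.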

Why do you think it’s important?

Model-driven engineering has become an established tool in software development. The low-code and no-code solutions that are so fashionable today are still essentially MDE solutions aimed at non-experts. You could say that model-driven engineering makes it possible to democratize software development and vastly enhance productivity: many components in software development are very tedious to create, and their creation can be automated, which saves money and time. Furthermore, when the software has been generated automatically and you detect a problem, you can modify the automatic generation process so that the bug will never appear again.

What other lines of research are you working on?

We’re also involved in software analysis, which consists of using different tools to examine software and learn more about it: whether or not it is high quality, how it will perform, etc. Another research line is based on formal methods, which apply mathematical, and therefore verifiable, foundations to confirm whether software behaves according to its specifications.

How is artificial intelligence (AI) involved in your research?

We’re not working on developing new AI methods, but instead on taking advantage of existing algorithms and techniques to improve the software development process. In that respect, we’re working on LOCOSS, a project in which we’re trying to make it easier for developers who are not experts in AI to include AI techniques and components that enable them to create intelligent software. To do this, we need to categorize all the possible intelligent applications and have a repository of AI components.

You recently began a project called AIDOaRt with 32 partners from all over Europe. What does this involve?

AIDOaRt is the evolution of a project called MegaM@Rt2, which aimed to provide a framework for the continuous development and deployment of complex systems using scalable model-driven engineering techniques. The goal of the entire AIDOaRt consortium, which includes partners from academia but, above all, from industry, is to use artificial intelligence tools to improve this process of continuous development and deployment of cyber-physical systems.

What is this process of continuous development and deployment, and why is it important?

There are many phases in a software development process: design, programming, software validation, cloud deployment, etc. The objective of a continuous development and deployment process is to have tools that cover all these phases, from the design of the software to its deployment, and that provide an overview of the entire process. Considering the process as a whole allows us to monitor it and transfer the information obtained from the software while it is running back to the design and development stages. In other words, after you’ve created the software, deployed it, and started running it, you need tools that enable you to check whether something is not working well and to modify it at the starting point, thereby improving the process iteratively.

What is the role of Artificial Intelligence in this process?

When you have a very large piece of software that is deployed and running, this performance validation and analysis process is highly complex, and it’s difficult to determine the factors that are leading the software to fail. With AI you can create a model that tells you that, when a series of conditions arise during the monitoring, it’s because you have a problem at a specific point. These techniques allow you to identify these error conditions more easily based on the monitoring information. Artificial intelligence can also be applied in the modelling or coding phase, using an AI component that helps the developer by automatically generating parts of the software. Another option is to develop an algorithm that predicts when you’re running out of resources, such as memory or computing power, so that the developer can take action.
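To make the last point more concrete, here is a small Python sketch that estimates when a resource will run out based on monitoring data. It is only a stand-in under stated assumptions: it fits a simple straight-line trend by least squares rather than using a real AI model, and the function name `predict_exhaustion`, the sample format, and the numbers are all hypothetical rather than taken from AIDOaRt.

```python
def predict_exhaustion(samples, limit):
    """Estimate when resource usage will reach `limit`.

    `samples` is a list of (time_seconds, usage) pairs from monitoring.
    Returns the estimated time in seconds, or None if usage is not growing
    or there is not enough data. Assumption: usage grows roughly linearly.
    """
    n = len(samples)
    if n < 2:
        return None

    mean_t = sum(t for t, _ in samples) / n
    mean_u = sum(u for _, u in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        return None  # all samples share one timestamp, so no trend can be fitted

    # Ordinary least-squares fit of usage = intercept + slope * time.
    slope = sum((t - mean_t) * (u - mean_u) for t, u in samples) / var_t
    if slope <= 0:
        return None  # usage is flat or shrinking, so no exhaustion is predicted

    intercept = mean_u - slope * mean_t
    return (limit - intercept) / slope


# Hypothetical monitoring data: memory in MB sampled every 10 seconds.
memory_samples = [(0, 100), (10, 200), (20, 300)]
eta = predict_exhaustion(memory_samples, limit=1000)
if eta is not None:
    print(f"Memory limit expected to be reached at t = {eta:.0f} s")
```

A developer could run a check like this on each monitoring window and raise an alert when the estimated time falls below a chosen threshold; a learned model would replace the linear fit when usage patterns are less regular.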

What do AIDOaRt’s cyber-physical systems consist of?

They’re systems in which the software and physical parts are inherently intertwined and must work together to run properly, such as an industrial robot, an automated robotic arm, or an autonomous pallet transporter. These systems are very important in what is known as Industry 4.0, but almost all systems related to the Internet of Things are cyber-physical systems as well: all the systems that you might find in a smart city, including air quality, traffic, and meteorological sensors, have a very important software part and could benefit from the results of the AIDOaRt project.

What is the challenge involved in working with these systems?

Developing the software for one sensor can be simple, but doing it for a system with thousands of sensors sending data in a city is a very complex undertaking. Deployment is also difficult: you can have sensors that send data every few seconds, the software has to respond in a limited time, the networks have to work properly, the entire system has to be updated at the same time, etc. Furthermore, this is not a problem that will arise five years from now. It’s already here, and we don’t have any standardized guide as to how we should implement all these processes.

Another European project that is also related to cyber-physical systems is TRANSACT. What does it consist of?

TRANSACT is also a project with a European consortium of 32 members which is part of the same ECSEL call as AIDOaRt, but in this case, it is led by industry. The objective is to be able to redesign the architectures of cyber-physical systems that usually work at a local level, such as a CT machine in a hospital, or a wastewater treatment plant, so that they can take better advantage of cloud and edge computing.

One of the key aspects is that it deals with “critical” systems.

Yes, the project focuses on systems that are critical to people’s security because of the privacy of the data they handle, or because of the impact that a failure in the system can have on the environment, to name just a couple of examples. These systems have various problems. On the one hand, migration to the cloud has its risks in terms of data privacy. This is where the edge would come in, as it would act as an intermediate layer. The edge could be considered a “mini cloud” that would be in the institution’s data centre, which would allow for lower latency due to being closer, as well as providing more control over data security. However, critical systems like those in hospitals and wastewater treatment plants have often not been designed to work with the cloud or the edge. For this reason, at TRANSACT we aim to be able to apply the potential of these computing resources to already existing systems, and to provide a series of guides and tools that enable these critical systems to be adapted and modernized.

What most appeals to you about MDE research?

Although I started out in software engineering research almost by chance, what has always attracted me to model-driven engineering is the fact that it can be applied to any branch of computer science. In fact, I’ve worked with MDE in many areas: formal methods, bioinformatics, software product lines, document product lines, automated document generation, performance analysis to predict how systems work, cyber-physical systems, etc. Ultimately, it’s all about creating models that make life easier for programmers.