Chatbots in Higher Education (Part I)

11 March, 2019

In this post we’ll be talking about chatbots, but not to explain what a chatbot is or how to make one. We want to offer an assessment from the point of view of an institution that is beginning to explore this world. There’s an impetus in higher education to roll out chatbots as a means of answering student queries. For example, at the last EXPOELEARNING event in Madrid, which some members of the eLearn Center attended, a panel of experts was devoted to this issue.

Generally, when we talk about the “use of chatbots as a student help channel”, what we really should be saying is “we want to use chatbots as a means of communication between university students and a series of developing technologies that we call, as a whole, artificial intelligence”.

This sentence includes two of the three most relevant concepts of this post: chatbot and artificial intelligence (which we’ll call AI from now on). The third, which will come up in the second part of this post, is personal assistant.

Chatbots

In truth, chatbots are nothing more than a communication channel between people and machines. Artificial intelligence is something else. So far, it’s a series of partially developed technologies that, as they mature, will emerge from this well of potential that is AI and make a name of their own. In a few years’ time, no one will say that tone analysis of a conversation is AI, in the same way that no one today would call a pen an ICT tool. In fact, there are chatbots that do not use AI, most likely because the technologies that they use have already been assimilated under their own name.

What does using a chatbot mean? It means giving an order to a machine: the machine executes the task we ask of it and replies to us. It’s not that different from what we have now. In what way is a chatbot revolutionary? Well, it’s revolutionary because it can imitate the way a person talks. When we say “talk”, we mean “chat”: writing words on a screen in the form of a conversation, although some chatbots can understand our voice using a speech-to-text program and reply to us with a synthetic voice using text-to-speech.

People have always been fascinated by the idea of machines being able to imitate us. But as fun as that is, it will only keep us entertained for a while. After that, the chatbot will only make sense if it’s genuinely useful.

Behind these chatbots there is a huge network of questions and answers: the user asks, the chatbot answers. As we said, it’s not that revolutionary: it’s a huge database of FAQs accompanied by a search engine and a few conversational formulae.
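To make the “FAQ database plus search engine” idea concrete, here is a minimal sketch in Python. The FAQ entries, the stopword list and the word-overlap scoring are all assumptions made for illustration; they are not how any particular chatbot product works.

```python
import re

# Hypothetical FAQ entries, invented for illustration.
FAQS = [
    ("How do I enrol in a course?",
     "Enrolment is done through the virtual campus."),
    ("When is the deadline to apply for a grant?",
     "Grant deadlines are published on the grants page."),
    ("How can I get my academic transcript?",
     "You can request a transcript from the secretary's office."),
]

# Very common words that say little about what the question is about.
STOPWORDS = {"a", "an", "the", "i", "do", "is", "in", "to", "for",
             "my", "how", "can", "when", "what"}


def tokenize(text):
    """Lowercase the text and return its set of non-stopword words."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def answer(question, faqs=FAQS):
    """Return the answer whose stored question shares the most words
    with the user's question, wrapped in a conversational formula."""
    words = tokenize(question)
    best_score, best_answer = 0, None
    for faq_question, faq_answer in faqs:
        score = len(words & tokenize(faq_question))
        if score > best_score:
            best_score, best_answer = score, faq_answer
    if best_answer is None:
        return "Sorry, I don't know. Could you rephrase the question?"
    return "Good question! " + best_answer
```

Calling `answer("What is the grant deadline?")` picks the grants entry because it shares the words “grant” and “deadline” with the stored question. A question with no words in common with any entry gets the fallback reply.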

This basis can be made more sophisticated in various ways. Some of the chatbot’s answers can include a question to the user, and from there a network of questions and answers can be built up that seems more or less like a real conversation.
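A network of questions and answers like the one just described can be sketched as a small dialogue tree. The node names, messages and keyword matching below are hypothetical, and a real chatbot would need far more robust matching than a substring check.

```python
# Hypothetical dialogue tree: each node has a message and a mapping
# from keywords expected in the user's reply to the next node.
TREE = {
    "start": {
        "message": "Is your question about enrolment or grants?",
        "next": {"enrolment": "enrolment", "grants": "grants"},
    },
    "enrolment": {
        "message": "Are you a new or a returning student?",
        "next": {"new": "enrol_new", "returning": "enrol_returning"},
    },
    "grants": {
        "message": "Grant information is on the grants page.",
        "next": {},
    },
    "enrol_new": {
        "message": "New students enrol through the admissions form.",
        "next": {},
    },
    "enrol_returning": {
        "message": "Returning students enrol from the virtual campus.",
        "next": {},
    },
}


def step(node_id, user_reply, tree=TREE):
    """Return the id of the next node, or the same node if the
    reply contains none of the expected keywords."""
    reply = user_reply.lower()
    for keyword, next_id in tree[node_id]["next"].items():
        if keyword in reply:
            return next_id
    return node_id


def run(replies, tree=TREE):
    """Play a scripted conversation and return the chatbot's messages."""
    node_id = "start"
    messages = [tree[node_id]["message"]]
    for reply in replies:
        node_id = step(node_id, reply, tree)
        messages.append(tree[node_id]["message"])
    return messages
```

For example, `run(["I want to ask about enrolment", "I'm a new student"])` walks from the opening question to the follow-up question and ends at the answer for new students.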

Another way of making it more sophisticated is by applying some of the technologies from the aforementioned AI “well”, primarily NLP (Natural Language Processing) and ML (Machine Learning). These technologies are the ones that make the chatbot seem “intelligent”. Instead of running a search through a list of FAQs, the chatbot analyses the user’s question to work out the intention, the important parts or elements, the user’s tone, or even the user’s personality, and then gives them a suitable prefabricated answer.
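The step from “searching FAQs” to “working out the intention and the important elements” can be illustrated with a deliberately simple, rule-based stand-in. Real systems would use trained NLP and ML models. The intents, trigger words, answers and course-code format below are all assumptions made for this sketch.

```python
import re

# Hypothetical intents and the words that trigger them.
INTENTS = {
    "enrolment": {"enrol", "enrolment", "register", "registration"},
    "grants": {"grant", "grants", "scholarship", "funding"},
}

# Prefabricated answers, one per intent, plus a fallback under None.
ANSWERS = {
    "enrolment": "Enrolment for {course} is done through the virtual campus.",
    "grants": "Grant information is published on the grants page.",
    None: "Sorry, I didn't understand. Could you rephrase?",
}

# Assumed course-code format: two capital letters and three digits.
COURSE_CODE = re.compile(r"\b[A-Z]{2}\d{3}\b")


def analyse(message):
    """Return (intent, course); either may be None if not detected."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    scores = {name: len(words & triggers)
              for name, triggers in INTENTS.items()}
    intent = max(scores, key=scores.get)
    if scores[intent] == 0:
        intent = None
    match = COURSE_CODE.search(message)
    course = match.group(0) if match else None
    return intent, course


def reply(message):
    """Pick the prefabricated answer for the detected intent and fill
    in the detected course, if any."""
    intent, course = analyse(message)
    return ANSWERS[intent].format(course=course or "your course")
```

Here `analyse("How do I register for CS101?")` detects the enrolment intent and the element `CS101`, so `reply` can slot the course code into the prefabricated answer instead of returning a generic FAQ entry.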

There are some that can go even further and that improve with use. The more people use them, the better the answers they give because they “learn” from their mistakes.

Finally, a further step in sophistication is for the chatbot to recognise its user: their personal details, their previous conversations, and other information about them, obtained by accessing the databases that hold it.

Opportunities for chatbots

Right now, we aren’t considering putting a chatbot on a real, physical help desk. Instead, we’re thinking of chatbots that respond from a virtual environment. In on-site universities, the majority of the questions that they receive online are about enrolments, grants and other administrative matters. That’s why one of the first applications of chatbots has been to answer these types of question.

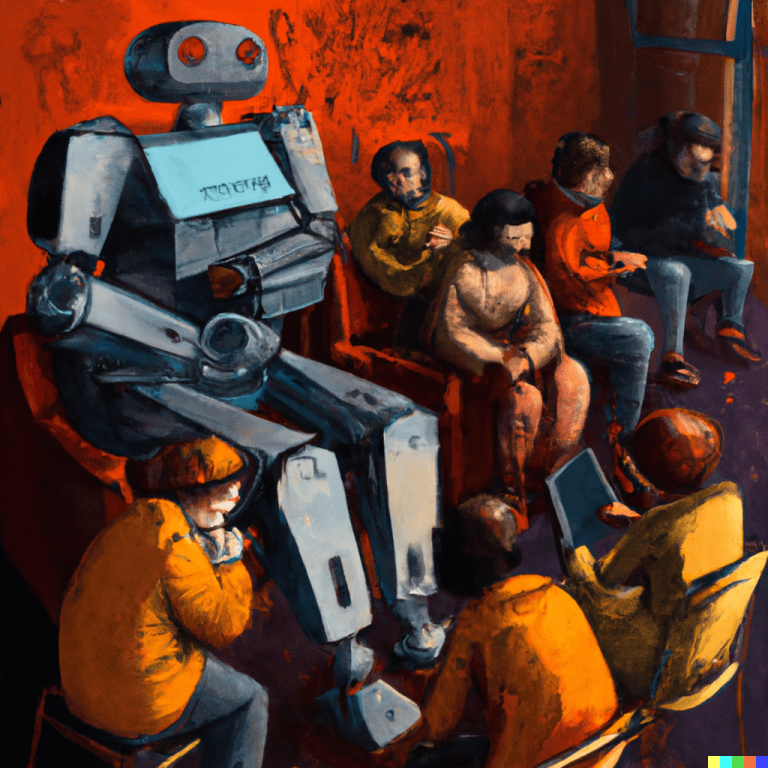

This assistant is featured in the film Elysium

Many of these questions can be answered with information that is already on the University’s website. To fine-tune the chatbot, the University will have to gather a register of questions and answers and maintain them to keep the chatbot up to date and under constant improvement. Therefore, the chatbot won’t be much more than a search engine for a list of FAQs.

Virtual universities can go further and have chatbots answer questions about teaching and course content. At the UOC, communication between students and professors takes place via forums. Gathering all the questions and answers posted using this tool and turning them into a list of FAQs requires effort because the information isn’t structured. What would be needed, therefore, is an initial effort to give all of this information a structure and to start gathering data in a valid way.
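As a rough sketch of what giving forum content a structure could mean, the Python below pairs each student question in a thread with the next professor reply and stores the result as a record. The `Post` and `FaqEntry` fields, the role labels and the “contains a question mark” heuristic are assumptions for illustration, not a description of the UOC’s forums.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author_role: str  # "student" or "professor" (assumed labels)
    text: str


@dataclass
class FaqEntry:
    course: str
    question: str
    answer: str


def extract_faqs(course, thread):
    """Pair each student question with the next professor post.

    A student post counts as a question if it contains "?". This is
    a crude heuristic; real forum data would need manual review.
    """
    entries = []
    pending = None  # latest student question still awaiting an answer
    for post in thread:
        if post.author_role == "student" and "?" in post.text:
            pending = post.text.strip()
        elif post.author_role == "professor" and pending is not None:
            entries.append(FaqEntry(course, pending, post.text.strip()))
            pending = None
    return entries
```

Records in this shape could then feed the kind of FAQ list a chatbot searches, although in practice someone would still have to check and curate the extracted pairs.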

From here, we can build a chatbot that answers a significant percentage of these questions. Cooperation between professor and chatbot could lead to an optimum combination of immediacy and quality of response, releasing the teacher from mechanical tasks and from repetitive or administrative communication, and allowing them to spend time on more beneficial tasks. One of these tasks would be to “train” the chatbot constantly with new questions and answers so that it stays up to date and becomes increasingly efficient.

Conclusions

We will shortly publish the second part of this post, which will include the conclusions to the whole text.

For further information about this topic you can read this article about the Briefing paper: chatbots in education.

Header photo by Philipp Katzenberger on Unsplash